Machines have pervaded our daily lives, and we need to interact with them all the time. Over the centuries, the way we interact with them has changed dramatically, as machines have evolved from purely mechanical tools into complex robots endowed with humanoid capabilities. Robots have already started to leave factories and are beginning to enter our schools, workplaces, and homes.

It therefore makes sense that people should be able to interact with robots in ways that feel comfortable and natural to them. A key challenge for robots, or Artificial Agents (AA), is to establish contingent interaction: Artificial Agents should not only react to human actions, but react in ways that are congruent with the emotional and psychophysiological state of the human user or interlocutor.

The latter aspect is especially relevant for so-called social robots, that is, robots devoted primarily to interacting with human interlocutors, such as museum tour-guide robots or robots that interact with the elderly.

READING HUMAN EMOTIONS

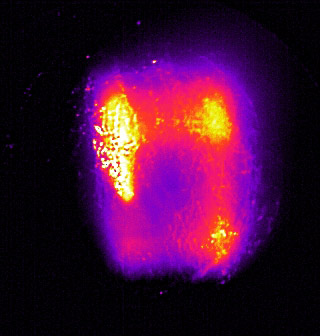

Endowing artificial agents with the ability to read and interpret human psychophysiological and emotional states is a major challenge in the field of human-machine interaction. Typically, psychophysiological and emotional states are monitored by measuring several autonomic nervous system (ANS) parameters, such as skin conductance response, palm temperature, heart rate and/or breathing rate modulations, peripheral vascular tone, and facial expression.
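To illustrate how one of these parameters can be extracted from a raw signal, here is a minimal Python sketch that estimates breathing rate by counting upward crossings of the signal mean. It assumes a clean, uniformly sampled respiration trace (for example from a chest belt) at a known sampling rate `fs`; the function name `breaths_per_minute` and the synthetic demo signal are hypothetical, and this is not the method used by any specific system described here. Real recordings would need filtering and artifact rejection first.

```python
import math
from statistics import mean


def breaths_per_minute(signal: list[float], fs: float) -> float:
    """Estimate breathing rate from a respiration trace sampled at fs Hz.

    Hypothetical helper: counts upward crossings of the signal mean,
    assuming one crossing per breath and a clean, detrended signal.
    """
    if fs <= 0 or len(signal) < 2:
        raise ValueError("need a positive sampling rate and at least two samples")
    centre = mean(signal)
    # An upward crossing happens when one sample is below the mean
    # and the next one is at or above it.
    crossings = sum(
        1 for prev, cur in zip(signal, signal[1:]) if prev < centre <= cur
    )
    duration_min = len(signal) / fs / 60.0
    return crossings / duration_min


if __name__ == "__main__":
    # Synthetic 60 s trace at 10 Hz: a 0.25 Hz sine, i.e. 15 breaths per minute.
    fs = 10.0
    trace = [math.sin(2 * math.pi * 0.25 * (n / fs) + 0.3) for n in range(600)]
    print(breaths_per_minute(trace, fs))  # prints 15.0
```

Mean-crossing counting is chosen here only for simplicity; on noisy signals, a spectral estimate (the dominant frequency within a typical breathing band) is usually more robust.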
